Welcome back to the vRad Technology Quest.

At vRad, we value our ability to deliver frequent, regular, and minimally interruptive releases. Today I’ll provide an overview of the development pipeline we use to achieve those releases.

What is a development pipeline? The development pipeline is the chain of tools and processes that we utilize to take the code from a developer’s laptop, get it ready for prime-time, and ship it to production. Our development pipeline is a large part of the overall Software Development Life Cycle at vRad.

Software Development Life Cycle refers to the overall set of tasks for building, testing, deploying, and maintaining software.

vRad’s Development Pipeline is “Continuous Test.”

If you are familiar with development pipelines, you may have heard terms like “continuous integration”, “continuous deployment”, “continuous release”, and other phrases using the term “continuous”. Like other companies and teams, we place a high value on the “continuous” aspect of development. We often think of the pipeline as a conveyor belt in a factory: keeping it moving and on schedule is critical.

Our preferred term for our development pipeline is “Continuous Test” because testing is our primary focus through the pipeline.

Continuous Test refers to a model of running tests on a continual basis to ensure quality.

vRad’s end goal in software development is to release high quality features to production in an efficient way. Our automation focus within the pipeline isn’t centered on releasing to production, but instead on constantly testing our changes. Our pipeline is inspired by other “continuous” pipeline models from other large-scale platforms including Etsy (https://codeascraft.com/2012/02/13/the-etsy-way/) and Netflix (http://techblog.netflix.com/2016/03/how-we-build-code-at-netflix.html).

Some steps we’ll explore here might not seem like traditional “tests”, but at vRad, our teams view the entire process as testing for production. This includes compiling the code, packaging the code, deploying the code, running automated tests, running manual validations, and over 75 other checklist items that make up an iteration of our SDLC (Software Development Life Cycle).

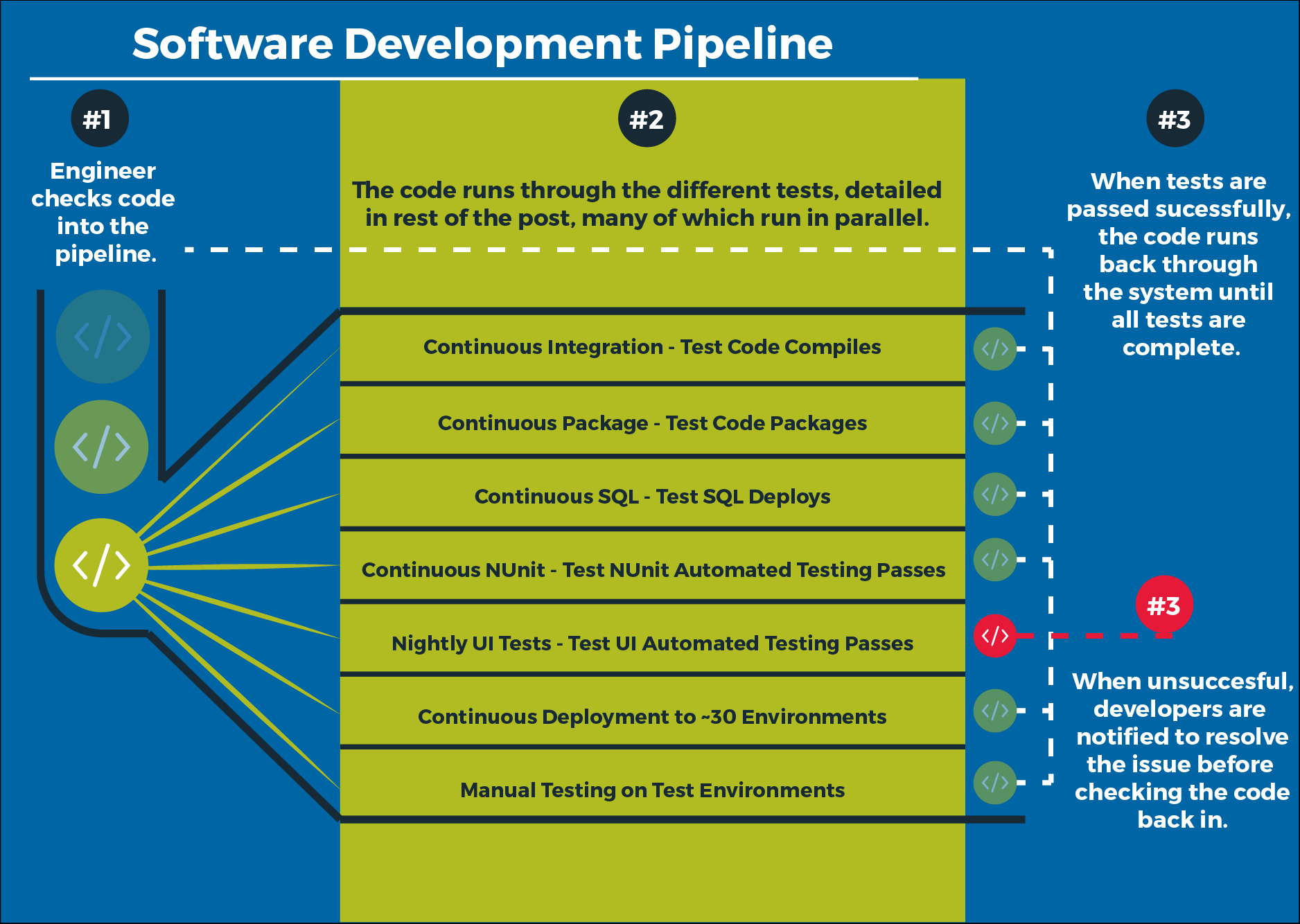

The below infographic illustrates the various branches of our pipeline – many of these steps are run in parallel with each other; we’ll talk about each type of test below.

Continuous Test Culture

Before diving into the details of individual test steps, I want to share a bit about the culture within the vRad engineering department.

When developing software, the starting point is a developer sitting at her computer. We believe that each developer should be empowered to run as much of the full pipeline as possible from her computer, or “locally” as we call it. We have over 100 different modules across 3 subsystems, which are worked on by a mixture of our product teams. Each developer has the tooling and the know-how not only to compile all of our applications, but also to perform a full packaging of our products, deploy all of our modules, and run our thousands of automated tests, all right from her laptop.

Once she checks the code into our source code repository, our Build Server (Atlassian Bamboo and BuildGuru) initiates a host of automated continual tests on our test infrastructure. Our testing infrastructure will notify a developer if she breaks any tests.

*Stay tuned for more on our Build Server in vRad’s Build Tools (#5) and our test infrastructure in vRad Test Environments (#3) and vRad Automated Testing (#4)*

While a broken test becomes a high priority to fix, test breakage is not considered a bad thing. We encourage developers to make changes that move our platform forward. Our pipeline is designed to catch the mistakes so developers don’t have to use a “fear driven development” methodology.

Build Tests

Among the first sets of tests in our pipeline are the build tests.

Build tests verify that code can be successfully compiled and packaged for deployment.

An important part of the philosophy at vRad is to “build everything”. This means that each check-in triggers an entire system build: over 100 modules are built. The build takes approximately 20 minutes and results in a package that contains all 3 of our subsystems in full, over 5 GB of packaged software. This philosophy ensures that we always have the latest build of every module of our systems, so changes are flushed through the system quickly and efficiently.

I’ll share more about our build tools in an upcoming article; stay tuned for vRad’s Build Tools (#5). One of the key aspects of our entire development pipeline is performance. Because we run all of these test steps continually, it’s important for engineers to get feedback right away. Compiling our entire platform used to take over 2 hours; we recognized this as a bottleneck and have reduced it to about 20 minutes over the last few years. To be specific, a raw compile of our platform takes only a few minutes and runs as its own test step. The 20 minutes covers a full package of the platform, which involves writing the applications and components in a particular way into a package to be deployed during later steps.

Once packaging is verified, we move to deployment tests to verify that we can successfully deploy the software.

Deployment Tests

Every hour we produce a new package from our “build test” and that package is immediately tested for deployment. The deployment uses the same metadata-driven toolkit that produces the package, which helps simplify the system’s knowledge of the applications themselves.

vRad application deployment comes in various shapes and sizes: web applications, Microsoft ClickOnce-based Windows applications, Windows service applications, SQL modules, and various others. Each module carries quite a bit of metadata, including security information, configuration information, and about 15 other attributes, so driving our builds and deployments from the same toolkit gives us a lot of flexibility around all this metadata.

Once a build is packaged and deployed, that build is promoted for usage in other environments. This idea of ‘promotion’ is important for our pipeline because different environments can tolerate different levels of code. In a typical development phase, for example, it is acceptable for our package to transit through builds, deployments, and hours of various automated tests prior to being available (promoted) for usage by another environment. On the other hand, if a flaw is discovered at 2am and needs an immediate resolution, we can quickly build a single application and run a limited set of tests prior to releasing to a test environment – we build in the flexibility of skipping some of the promotion steps for urgent situations.
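The promotion idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the stage names, the `Build` class, and the `urgent` flag are all hypothetical and are not taken from vRad’s actual tooling.

```python
from dataclasses import dataclass, field

# Hypothetical stage names, loosely following the steps described in this article.
FULL_PATH = ["package", "deploy", "unit_tests", "ui_tests"]
# Assumed shortened path for urgent fixes: build one application, run limited tests.
URGENT_PATH = ["build_single_app", "limited_tests"]


@dataclass
class Build:
    label: str
    passed: set = field(default_factory=set)  # stages this build has passed so far

    def record_pass(self, stage: str) -> None:
        self.passed.add(stage)


def can_promote(build: Build, urgent: bool = False) -> bool:
    """A build is promotable once it has passed every stage on its path."""
    required = URGENT_PATH if urgent else FULL_PATH
    return all(stage in build.passed for stage in required)


hotfix = Build("hotfix-example")
hotfix.record_pass("build_single_app")
hotfix.record_pass("limited_tests")

print(can_promote(hotfix))               # False: the full path has not been run
print(can_promote(hotfix, urgent=True))  # True: the urgent path is satisfied
```

Keeping the two paths as plain data makes the trade-off explicit: the urgent path is a deliberate, visible shortcut rather than a way around the pipeline.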

SQL Deployment Tests

While application deployments have pretty robust tooling and rely primarily on “file copy” technology, SQL deployments are a bit more complicated. We check all of our SQL code into our source code repository in an idempotent fashion, meaning the SQL can be run repeatedly without error. To deploy a database, we run all of the idempotent SQL for that database.
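To make “idempotent” concrete, here is a minimal sketch that uses Python’s built-in `sqlite3` module as a stand-in database. The table name, the rows, and the `deploy` helper are hypothetical, and the SQL dialect vRad actually uses may express the same idea with different statements.

```python
import sqlite3

# Hypothetical schema; each statement is written so that re-running it is harmless.
IDEMPOTENT_SQL = """
CREATE TABLE IF NOT EXISTS app_setting (
    id   INTEGER PRIMARY KEY,
    name TEXT NOT NULL UNIQUE
);
INSERT OR IGNORE INTO app_setting (id, name) VALUES (1, 'timeout_seconds');
INSERT OR IGNORE INTO app_setting (id, name) VALUES (2, 'retry_count');
"""


def deploy(conn: sqlite3.Connection) -> None:
    """Apply the whole script; safe to call any number of times."""
    conn.executescript(IDEMPOTENT_SQL)


conn = sqlite3.connect(":memory:")
deploy(conn)
deploy(conn)  # the second run raises no error and adds no duplicate rows

row_count = conn.execute("SELECT COUNT(*) FROM app_setting").fetchone()[0]
print(row_count)  # 2
```

Because every statement checks for existing state before changing anything, the same script can be deployed to an empty database or to one that is already up to date.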

Early on, we found that around 75% of our deployment failures were SQL related. To correct this, we added a parallel process step to catch SQL-related failures earlier. While packaging is going on, we test deploying SQL to a DB-only environment so that SQL failures provide feedback within 5 minutes instead of within 25 minutes (when waiting for packaging to complete).
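The effect of running the SQL check alongside packaging can be simulated with Python’s standard `concurrent.futures` module. The function names and the time scale below are assumptions made for illustration; the sleeps stand in for real work, with one simulated minute lasting 0.01 seconds.

```python
import time
from concurrent.futures import ThreadPoolExecutor, as_completed

# Stand-in durations: 1 simulated minute = 0.01 s of real time.
SCALE = 0.01


def package_platform() -> str:
    time.sleep(20 * SCALE)  # full packaging takes about 20 minutes in the article
    return "packaging"


def sql_deploy_check() -> str:
    time.sleep(5 * SCALE)  # SQL feedback arrives within about 5 minutes
    return "sql_deploy_check"


def run_in_parallel() -> list:
    """Start both steps together and return their names in completion order."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        futures = [pool.submit(package_platform), pool.submit(sql_deploy_check)]
        return [f.result() for f in as_completed(futures)]


finished = run_in_parallel()
print(finished)
```

The SQL check reports first because it no longer waits behind packaging, which is the whole point of the parallel step.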

Unit Tests

We have thousands of NUnit tests built into our code base. These tests are built, packaged, and deployed in a similar way to our applications. A full package of our software includes the tests themselves.

Typically, the term “unit test” implies a fast, “code only” test that is small (thus the term “unit”). At vRad, we test in layers: our unit tests comprise traditional code-only unit tests as well as NUnit-driven tests that exercise the code and database layers together. For automation purposes, we treat these two types of tests the same and refer to them as unit tests.
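The two layers can be illustrated side by side. The sketch below uses Python’s `unittest` and an in-memory `sqlite3` database purely as stand-ins for NUnit and a real database; the functions under test (`normalize_name`, `save_name`) and the `person` table are hypothetical.

```python
import sqlite3
import unittest


def normalize_name(raw: str) -> str:
    """Hypothetical function under test: trim and title-case a name."""
    return raw.strip().title()


def save_name(conn: sqlite3.Connection, raw: str) -> None:
    """Hypothetical data-access function that stores the normalized name."""
    conn.execute("INSERT INTO person (name) VALUES (?)", (normalize_name(raw),))


class CodeOnlyTest(unittest.TestCase):
    """Layer 1: exercises code with no external dependencies."""

    def test_normalize_name(self):
        self.assertEqual(normalize_name("  ada lovelace "), "Ada Lovelace")


class CodeAndDatabaseTest(unittest.TestCase):
    """Layer 2: exercises the code together with a database."""

    def setUp(self):
        self.conn = sqlite3.connect(":memory:")
        self.conn.execute("CREATE TABLE person (name TEXT NOT NULL)")

    def tearDown(self):
        self.conn.close()

    def test_save_name_round_trip(self):
        save_name(self.conn, "  ada lovelace ")
        stored = self.conn.execute("SELECT name FROM person").fetchone()[0]
        self.assertEqual(stored, "Ada Lovelace")


# Both layers go into one suite, mirroring how they are treated the same for automation.
loader = unittest.defaultTestLoader
suite = unittest.TestSuite([
    loader.loadTestsFromTestCase(CodeOnlyTest),
    loader.loadTestsFromTestCase(CodeAndDatabaseTest),
])
result = unittest.TextTestRunner(verbosity=0).run(suite)
```

Both test classes are collected and reported by the same runner, even though only the second one touches a database.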

To run these tests, we deploy them to clients and execute them remotely, then collect and display the results in Bamboo. Any failures are then triaged by the developers associated with the changes.

Automated UI Tests

Finally, we also have automated UI tests using the Ranorex product. We have thousands of test cases built into our UI Test Suites and we execute them similarly to our unit tests – we deploy and execute them remotely.

Our Ranorex tests utilize a Page-Object design pattern that we’ve found to be incredibly robust. We spend very little time triaging these tests and their success rate surpassed our expectations. When failures do occur, we have a small team of Ranorex developers who own triage to determine if the script failed or if the failure was a genuine bug in the software.
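For readers unfamiliar with the Page-Object pattern, here is a minimal sketch in Python. It does not use the Ranorex API: `FakeDriver`, the locators, and the page names are all hypothetical stand-ins chosen so the example runs on its own.

```python
class FakeDriver:
    """Stand-in for a UI automation driver; not the Ranorex API."""

    def __init__(self):
        self.fields = {}
        self.current_page = "login"

    def type_into(self, locator: str, text: str) -> None:
        self.fields[locator] = text

    def click(self, locator: str) -> None:
        # Pretend the app navigates to the worklist after a complete login.
        filled = self.fields.get("#user") and self.fields.get("#password")
        if locator == "#sign-in" and filled:
            self.current_page = "worklist"


class LoginPage:
    """Page object: the only place that knows the login screen's locators."""

    USER = "#user"
    PASSWORD = "#password"
    SIGN_IN = "#sign-in"

    def __init__(self, driver: FakeDriver):
        self.driver = driver

    def sign_in(self, user: str, password: str) -> str:
        self.driver.type_into(self.USER, user)
        self.driver.type_into(self.PASSWORD, password)
        self.driver.click(self.SIGN_IN)
        return self.driver.current_page


# The test reads as user intent and never touches a locator directly.
driver = FakeDriver()
landed_on = LoginPage(driver).sign_in("test.user", "not-a-real-password")
print(landed_on)  # worklist
```

When a screen changes, only its page object needs updating, which is one common reason suites built this way tend to need less triage.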

Tying It Together

Our development pipeline is designed to continually test the vRad platform. Each change to our code base triggers half a dozen different test paths incorporating thousands of automated tests. This enables us to deliver features faster and with higher quality.

I hope you enjoyed this look into the vRad approach to building code; stay tuned for our next article on vRad Test Environments (#3). And remember, we’ll be tracking all 7 keys to unlocking a DevOps Culture, as they’re released, in the vRad Technology Quest Log.

Until our next adventure,

Brian (Bobby) Baker