vRad’s philosophy of frequently deploying software updates relies heavily on test automation, which ensures adequate test coverage for each release. Test automation is one of the most challenging aspects of software development at vRad, and we’ve tackled it with a multi-pronged approach that is continually evolving.

Test Automation: Finding ways to test software in an automated fashion to increase breadth and depth of coverage. Basically, writing software to test software.

We have two basic tenets at vRad for test automation:

- An automated test is not an automated test unless it successfully runs at least nightly.

- We love our manual testers and we will never, ever replace them with automated tests.

We Love Manual Testers

I simply cannot repeat this frequently enough: test automation is not a replacement for manual tests and we place an extremely high value on manual testing at vRad.

Some organizations will adopt automated testing with the goal of eliminating manual tests. Perhaps they are trying to automate all manual tests or their managers use automation as a metric for success. vRad does not do that. We, quite strongly, believe that no matter how much automated testing is in place, having people dedicated to ensuring quality is of the utmost importance. This ranges from ensuring developers follow standards and processes, to spending a lot of time on exploratory testing.

Our test team at vRad includes dedicated manual testers. For each monthly software release, the team executes hundreds of tests and does work that test automation simply cannot perform, such as:

- Teasing out software issues;

- Thinking like end-users;

- And performing hours of exploratory testing.

Basically, we tell our Software Quality Assurance (SQA) team to break things!

And on the rare occasions when a bug does get into production, we do not blame SQA. All team members are responsible for quality.

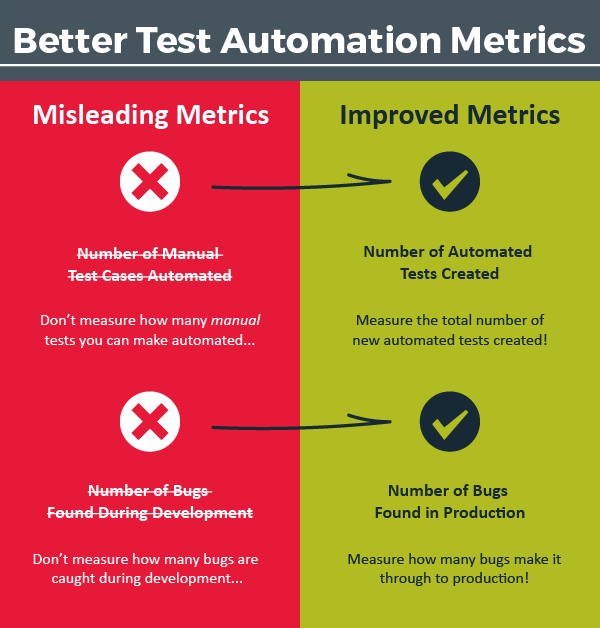

What Not to Measure

Before we discuss the types of tests we have, and dive into specific patterns, it’s important to spend time discussing metrics. Automation in general has a tendency to appear frightening. I often hear concerns that automation will take jobs away; in my experience a significant amount of automation actually creates opportunities rather than takes them away. Automation isn’t simply a matter of automating manual work – it supplements manual work.

From my perspective, a significant amount of automation anxiety is caused by the usage of misleading metrics; two of these metrics that I see frequently are:

- Number of Manual Test Cases Automated.

- Number of Bugs Found During Development.

Misleading Measure #1: Number of Manual Test Cases Automated

Measuring the number of manual test cases automated is very tempting – so tempting that even though I caution against using it, I capture this metric at vRad! The temptation is pretty simple: the goal of automation is to remove manual labor, so tracking how quickly we automate will show how fast we’re saving money!

I challenge that goal.

Approaching test automation with a goal of simply removing manual labor often backfires for myriad reasons:

- New test cases are constantly being added alongside new features.

- Some manual test cases are simply too complex to automate.

- Focusing on taking manual tests and making them automatic can result in a lot of lost opportunity for simpler, more focused tests.

At vRad our two primary goals with test automation are to:

- Increase the breadth of testing by testing all applications more broadly with each release.

- Increase the depth of testing by testing each application more thoroughly with each release.

While we do automate manual tests, replacing manual tests is not our primary objective – there are many manual tests our team may never automate.

I advise against managing to this metric, even if you choose to track it. At vRad, we do track how many manual test cases are automated – we go back and mark those tests as automated to avoid duplicate work – but we rarely chart or share it. We measure success through other metrics instead.

So, what does vRad measure?

Improved Measure #1: Number of Automated Tests Created

A large number of our automated tests were never manual to begin with – at least not as a defined manual test. Many of our automated tests are focused on specific functions, pages, buttons, and fields; for instance, we might test that a text box for “First Name” accepts various characters, has a certain field length, etc. While manual testers test this, we often don’t get this granular in our written Test Cases. Instead, this typically would occur during exploratory testing – trying to break things!
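As a rough illustration of this kind of granular check, here is a minimal Python sketch of field-level tests for a “First Name” text box. It is not vRad’s code: the `is_valid_first_name` function, the 50-character limit, and the set of accepted characters are all assumptions made up for the example.

```python
import unittest

MAX_FIRST_NAME_LENGTH = 50  # assumed limit, for illustration only


def is_valid_first_name(value: str) -> bool:
    """Hypothetical stand-in for the validation behind a "First Name" field."""
    if not value or len(value) > MAX_FIRST_NAME_LENGTH:
        return False
    # Accept letters (including accented ones) plus a few common separators.
    return all(ch.isalpha() or ch in " -'" for ch in value)


class FirstNameFieldTests(unittest.TestCase):
    def test_accepts_typical_names(self):
        for name in ["Ann", "José", "O'Brien", "Mary-Jane", "De la Cruz"]:
            with self.subTest(name=name):
                self.assertTrue(is_valid_first_name(name))

    def test_rejects_invalid_input(self):
        for name in ["", "R2D2", "x" * (MAX_FIRST_NAME_LENGTH + 1)]:
            with self.subTest(name=name):
                self.assertFalse(is_valid_first_name(name))

    def test_accepts_name_at_max_length(self):
        self.assertTrue(is_valid_first_name("x" * MAX_FIRST_NAME_LENGTH))
```

Saved to a file, the tests can be run with `python -m unittest`. Checks like these are cheap to write and rarely correspond to a written manual test case, which is why counting them separately makes sense.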

By not managing to the number of manual tests automated, it may seem that we’re not freeing up the SQA team at all. This is partly true – our main focus isn’t reducing the number of manual tests run, primarily because manual testers do a lot more than run defined manual tests. In addition, most of our testers, even long after they have run all the tests they can think of, still spend a lot of time performing exploratory testing. In a sense, we’re never done testing. By adding automated tests, we reduce manual testing in several core ways:

- Testers are less frequently blocked because basic workflow tests, known as “happy path” tests, are validated with automation. This is a significant gain for manual testers as they spend far less time with an environment that isn’t ready for testing.

- Issues are found before testers get to them. Without automated tests, manual testers would spend more time writing up bugs and triaging failures that automation would have caught.

- Tests are constantly run, so when issues are found in the automated tests there is far less manual retesting.

- Manual testers work alongside automation engineers – and they are familiar with the automated tests. This gives these professionals a good feel for where they want to spend more time testing in an explorative fashion, and where they can trust that basic functionality remains solid.

- We automate some manual tests. While we don’t manage towards this, we do automate manual tests, freeing up time.

Misleading Measure #2: Number of Bugs Found During Development

This metric is common for both manual testing and automated testing. And, again, this metric can be very tempting.

There’s a common piece of wisdom claiming that “the sooner bugs are found, the cheaper they are to fix.” For instance, if you can find a bug during design time, it will be much cheaper to adjust the design before building software than it will be to fix the bug once the software is already built.

This adage is partially correct. It is better to catch the bugs sooner rather than later. The flaw lies in the implication: if you focus on catching bugs early, you don’t have to test as much later.

Imagine that you are building a store application to sell toy cars. The team gets together through the various stages of development – requirements gathering, design, coding, feature testing – and works extra hard to make sure all bugs are worked out of the system as early as possible.

Regression of the application takes two days. There are two potential outcomes:

- In a perfect world, the team spends the two days performing regression on the store front application and they find zero bugs!

- Realistically, let’s say the team finds 2 bugs. Perhaps regression still takes two days, or maybe it adds an hour of retesting around those bugs.

You see, regardless of finding a few bugs or not, regression needs to occur! The team will not know if there are bugs unless the application is tested. For this reason, it is frequently simpler to look at regression testing as a fixed cost; catching bugs early is a positive thing and does reduce cost, but the majority of the cost is fixed anyway!
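The fixed-cost argument can be made concrete with a little arithmetic. The Python sketch below assumes 8-hour working days (so two days of regression is 16 hours) and one hour of retesting per bug found; both numbers are assumptions for illustration, not vRad measurements.

```python
REGRESSION_HOURS = 16.0  # two days of regression, assuming 8-hour days
RETEST_HOURS_PER_BUG = 1.0  # assumed retesting cost per bug found


def regression_cost(bugs_found: int) -> float:
    """Total regression effort in hours for a given number of bugs found."""
    return REGRESSION_HOURS + RETEST_HOURS_PER_BUG * bugs_found


def fixed_share(bugs_found: int) -> float:
    """Fraction of the total effort that is spent regardless of bugs found."""
    return REGRESSION_HOURS / regression_cost(bugs_found)


for bugs in (0, 2):
    print(f"{bugs} bugs: {regression_cost(bugs):.0f} h, fixed share {fixed_share(bugs):.0%}")
```

Under these assumptions, finding two bugs raises the total from 16 to 18 hours, so roughly 89% of the effort is spent whether or not any bugs turn up.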

At vRad, we choose not to manage around the number of bugs found during the development process. The teams themselves have a good instinct for the quality of the code being produced, and they can tell when too many bugs are coming from a certain developer or when a process change would help.

Instead of managing towards bugs found in development, we focus on…

Improved Measure #2: Number of Bugs Found in Production

We take the quality of our production environment very seriously. Each bug that is found is tracked; software engineering leadership reviews each one and discusses what can be done to avoid that bug (or type of bug) in the future. This often results in new test cases, additional test automation in specific areas, or better testing enabled by new processes.

Bugs in production are an inevitability, but many can be avoided. By focusing our testing on the end product, we reward our team for polish instead of punishing them for the diligence of our SQA team finding bugs during development.

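Let’s recap our improved metrics:

- Number of Automated Tests Created, instead of Number of Manual Test Cases Automated.

- Number of Bugs Found in Production, instead of Number of Bugs Found During Development.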

Types of Automated Testing

Now that we’ve established a few pitfalls to avoid, let’s take a deeper look at the actual tests we build at vRad.

Our automated tests are broken into three categories:

- Unit Tests

- Integration Tests

- User Interface (UI) Tests

Unit Tests

If you aren’t familiar with unit tests, the idea is to test a small chunk of code, often just a single function, without interacting with a large part of the platform. You might have a function called “GetText” that returns “Hello World”. In this case, a unit test might validate that the correct string is returned.

They’re designed to be fast. Developers generally run them on their PCs as they develop. Unit tests also run continuously – often with every continuous integration build (see how in vRad Development Pipeline (#2)).
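For readers who have never seen one, the “GetText” example might look like the following. This is a minimal sketch in Python using the standard `unittest` module, chosen only for illustration; it is not the framework or language vRad uses, and `get_text` is simply a Python rendering of the function described above.

```python
import unittest


def get_text() -> str:
    """Python rendering of the article's "GetText" function."""
    return "Hello World"


class GetTextTests(unittest.TestCase):
    def test_returns_expected_string(self):
        # The unit test touches nothing but the single function under test.
        self.assertEqual(get_text(), "Hello World")
```

Because the test has no external dependencies, it runs in milliseconds, which is what makes it practical to run on every build.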

vRad’s unit tests are fairly standard, and there are lots of great resources on the internet if you are interested in learning more about unit testing (http://www.nunit.org/index.php?p=quickStart&r=2.6.4 and https://www.youtube.com/watch?v=1TPZetHaZ-A).

Integration Tests

Integration tests, unlike unit tests, test deeper into the platform, but generally do not interact with the UI. For example, these tests might test code that reaches into our databases; they might control a service running on a server to stop it and start it.

Because integration tests actually change data, we’ve developed a few fail-safes to keep that data predictable. Some of our tests use a special copy of the databases that is restored for each test case – that way, each test starts from a known set of data. Many of our integration tests create or update whatever data they require. We have a rich set of APIs that enable us to control our data, drive our configurations, and use quite a bit of the software that makes up the platform.
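The “restore a known copy for each test case” idea can be sketched as follows. This is only an illustration in Python with an in-memory SQLite database; the `orders` table, the baseline row, and the `complete_order` function are hypothetical and say nothing about how vRad’s databases or APIs are actually built.

```python
import sqlite3
import unittest

# Hypothetical baseline data; stands in for the "special copy" of a database.
BASELINE_SQL = """
CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, status TEXT);
INSERT INTO orders (id, customer, status) VALUES (1, 'Test Customer', 'NEW');
"""


def complete_order(conn: sqlite3.Connection, order_id: int) -> None:
    """Hypothetical code under test: marks an order as completed."""
    conn.execute("UPDATE orders SET status = 'DONE' WHERE id = ?", (order_id,))
    conn.commit()


class OrderIntegrationTests(unittest.TestCase):
    def setUp(self):
        # Restore a fresh copy of the known data before every test case.
        self.conn = sqlite3.connect(":memory:")
        self.conn.executescript(BASELINE_SQL)

    def tearDown(self):
        self.conn.close()

    def status_of(self, order_id: int) -> str:
        row = self.conn.execute(
            "SELECT status FROM orders WHERE id = ?", (order_id,)
        ).fetchone()
        return row[0]

    def test_complete_order_updates_status(self):
        complete_order(self.conn, 1)
        self.assertEqual(self.status_of(1), "DONE")

    def test_each_test_starts_from_baseline(self):
        # Passes regardless of test order, because setUp restored the data.
        self.assertEqual(self.status_of(1), "NEW")
```

The second test is the point of the pattern: even though another test changes the order’s status, every test sees the same starting data.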

There are many philosophies on the best ways to write automated tests, particularly when it comes to changing data. At vRad, each of our subsystems (Biz, RIS, PACS) has a different purpose and therefore varies in architecture and design. In our decision to write integration tests (in addition to unit tests), we leverage our wealth of APIs with test hooks to test as much of the platform as we can. Often, we find that unit testing alone isn’t sufficient to cover the depth of a software platform, so we utilize these integration tests to perform deeper, more substantial tests of the platform.

Automated UI Tests

Finally, we have our automated UI tests. Like many companies, vRad had a couple of false starts before succeeding with automated UI tests. We use a product called Ranorex (http://www.ranorex.com/) to drive our tests. Our initial attempts relied quite a bit on “record and play” functionality. This is what it sounds like: a user records actions and that recording becomes the test – to run the test you simply replay the recording.

As might be expected, the record and playback method worked at first, when we had a small number of tests. But soon, the maintenance of those tests became unmanageable – there was too much flux inside of the applications to maintain the tests, which meant re-recording the processes. And the data we used for tests ended up being strewn across hundreds of modules of the recordings.

Our second attempt has proven to be more robust. We decided to utilize a “data-driven” approach based on a Page-Object design pattern. That’s a lot of buzzwords, so let me explain. First, we don’t do any record-and-play anymore. Instead, we break applications out into pages (or screens). For each page we develop a repository and an “object”. A repository is just the “paths” to each item, such as a button or a text box. An “object” is a chunk of code that describes what the page can do – the fields and buttons, what they do, what can be clicked, and so on.
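To make the repository and page object idea concrete, here is a minimal Python sketch for a hypothetical order page. It does not use Ranorex’s API: the `FakeDriver` class, the element paths, and the method names are all assumptions invented for the example, and a real driver would interact with an actual UI instead of recording calls.

```python
class FakeDriver:
    """Stand-in for a real UI driver; records values instead of driving a UI."""

    def __init__(self):
        self.fields = {}
        self.clicked = []

    def set_text(self, path: str, value: str) -> None:
        self.fields[path] = value

    def click(self, path: str) -> None:
        self.clicked.append(path)


class OrderPageRepository:
    """Repository: only the "paths" to each item on the page."""

    NAME_BOX = "/form[@id='order']/input[@name='name']"
    SUBMIT_BUTTON = "/form[@id='order']/button[@id='submit']"


class OrderPage:
    """Page object: what a user can do on the page, expressed as methods."""

    def __init__(self, driver: FakeDriver):
        self.driver = driver

    def enter_name(self, name: str) -> None:
        self.driver.set_text(OrderPageRepository.NAME_BOX, name)

    def submit(self) -> None:
        self.driver.click(OrderPageRepository.SUBMIT_BUTTON)


driver = FakeDriver()
page = OrderPage(driver)
page.enter_name("Ada")
page.submit()
print(driver.fields)
print(driver.clicked)
```

The design benefit is that tests call `enter_name` and `submit` and never mention a path. When the UI changes, only the repository (or at most the page object) needs updating, not every test.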

We develop test patterns based on the actions that can be taken on each page. For instance, on an order page a user might fill out a name and other information and submit it back to the server to create the order. The user might then land on another page that lists the orders she has made. Our test pattern would consist of those actions – filling out the information, submitting the order, and looking for the order on the list.

To test scenarios, we feed these test patterns data. Each data set given to a test pattern is considered a test case. It is easy to quickly have thousands of test cases. We manage this test data in a database with a small custom UI.
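A data-driven test pattern along these lines might look like the sketch below. It is written in Python purely for illustration: `FakeOrderApp` is an in-memory stand-in for the application, the rows in `TEST_DATA` are invented, and the list literal takes the place of the database where the real test data is described as living.

```python
import unittest


class FakeOrderApp:
    """In-memory stand-in for the application under test."""

    def __init__(self):
        self.orders = []

    def submit_order(self, name: str, item: str) -> None:
        self.orders.append({"name": name, "item": item})

    def list_orders(self) -> list:
        return list(self.orders)


def order_pattern(app: FakeOrderApp, name: str, item: str) -> bool:
    """Test pattern: fill out the order, submit it, look for it on the list."""
    app.submit_order(name, item)
    return {"name": name, "item": item} in app.list_orders()


# Each row is one test case fed to the same pattern.
TEST_DATA = [
    {"name": "Ada", "item": "toy car"},
    {"name": "José", "item": "toy truck"},
    {"name": "O'Brien", "item": "toy bus"},
]


class OrderPatternTests(unittest.TestCase):
    def test_order_pattern_for_every_data_row(self):
        for row in TEST_DATA:
            with self.subTest(**row):
                self.assertTrue(order_pattern(FakeOrderApp(), **row))
```

Adding a test case means adding a row, not writing code, which is how a handful of patterns can grow into thousands of test cases.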

This approach is much more maintainable than record-and-play. In fact, we spend so little time maintaining the tests that it surprises us quite frequently. They simply work.

And recall that we don’t consider something an automated test unless it runs at least nightly. All of our UI based automated testing runs at night and each morning we triage these tests. Using the Page-Object patterns, we spend very little time actually doing triage and most of the day is spent continuing to build out our test automation for new pages and applications.

What Happens When Tests Break?

At vRad, quality is paramount, so fixing tests is too. We run our tests at least nightly – and often more frequently. Keeping them working can be a challenge at first – tests start out brittle and might break frequently; but as our team monitors the tests and fixes them, they become more resilient with each iteration.

We’ve been pleasantly surprised at vRad with the results of our vigilance. We now have thousands of tests that run at least nightly and simply work. It can be a daunting task to run, triage and fix tests to always pass; but the result of having stable tests is worth the effort!

Let’s Recap.

Our automation efforts focus on measuring towards our values – adding more and more automated tests and reducing the number of bugs found in production. Test Automation at vRad is not about replacing manual tests or manual testers – rather, we deliver higher quality, faster, by increasing our testing depth and breadth through test automation.

Products, systems, environments, and code bases in general tend to gain complexity with time – a customer wants a configuration for this or the Ops team needs to be able to throttle that. Adding robust test automation is a key component of how vRad scales with our customers’ needs and the features of our platform.

Like most organizations, we still do not have enough automated testing. We are continually asking ourselves how we can write more automated tests and how we can run our automated tests faster. But all the progress we’ve made has enabled us to improve our quality and release more frequently. The investment is worth it!

Thanks for joining us on this journey through test automation, and stay tuned for our next post on vRad’s Build Tools (#5). And remember, we’ll be tracking all 7 keys to unlocking a DevOps Culture, as they’re released, in the vRad Technology Quest Log.

Until our next adventure,

Brian (Bobby) Baker